Executive's Guide to Evaluating Scalability

For vendors too

There’s no deterministic way of measuring a tool’s in-production scalability. You can only really see how it behaves in production when it’s… in production. In the sales/buying cycle, you will get a lot of jargon, numbers, and testimonials thrown at you by vendors, claiming that their solution can scale up and out with no problem whatsoever.

I write this first and foremost to give senior folks the best tools I found to guesstimate a product’s ability to scale, as well as a list of gotchas, caveats, and sweet nothings.

I also welcome any readers on the vendor side whose current marketing around scalability boils down to “we’re cloud-native so our solution is infinitely scalable.”

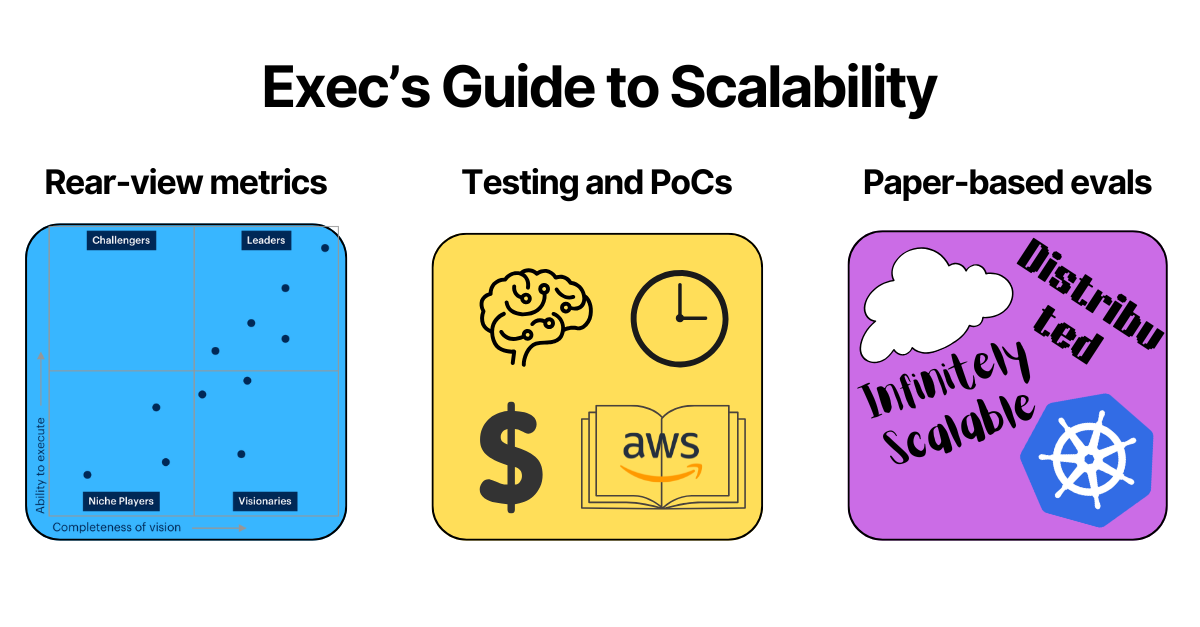

You can try to forecast scalability in three ways:

Rear-view metrics

Test environments

Paper-based exercises

Between all three, you will have a very good estimation as to whether the tool can scale. Chances are that you won’t have all three, but you can still have a good estimation with one or two.

I will spend the most time on the paper-based exercises, as they are the most convoluted of the three in marketing literature.

Rear-view metrics

This makes up to 50% of any of Gartner’s MQs, i.e. the ability to execute. You can make the assumption that if a vendor successfully scaled up for a peer or competitor, then it can also scale up in your environment. It’s better to have peers. If you’re a tier 1 telco, and the solution you’re evaluating is being used by other tier 1 telcos, there are some clear one-to-one mappings.

Some questions you can ask the vendors with regards to this include:

Largest deployment currently in-production. Quantify it however you find it applicable, like: number of hosts, data ingested per time frame, data storage, events per second, concurrent requests served, number of users, etc.

How many customers in the same business as us do you have?

How many F100/F500 customers do you have?

How many customers do you have in total?

While this is nice, it’s not great. Just because the solution works well in one environment, it doesn’t mean that its scalability is portable to another. A vendor’s largest deployment may belong to an unhappy customer or face performance issues. A large customer may have a small deployment, so you see the logo, but not the numbers.

Don’t choose a vendor over their customer base alone.

Test environments

This is the best objective indicator, but also the most difficult one to arrange. You can simulate scalability in a test environment in two ways: do your own test/PoC, or find a third party’s test results.

Third-party tests are great at getting actual numbers in terms of maximum throughput rates, concurrent user capacity, response time distributions under various loads, and resource consumption patterns (CPU, memory, network, storage). Some results can give you a clear objective differentiation between two or more tools.
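To make "response time distributions" a bit more concrete, here is a minimal Python sketch of how raw latency samples from a load test are usually boiled down to percentiles. The function names and the nearest-rank method are my own choices for illustration, not something a particular testing tool prescribes.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    # Multiply before dividing so whole-number ranks are computed exactly.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms):
    """Reduce a list of response times (in ms) to the percentiles most reports quote."""
    return {name: percentile(latencies_ms, p)
            for name, p in (("p50", 50), ("p95", 95), ("p99", 99))}
```

When you compare two tools, look at how p95 and p99 move as load increases. An average can look healthy while the slowest few percent of requests get much worse.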

If you do your own testing, you’ve got a long list of prerequisites before getting any useful data. You must first be able to define success metrics, have people who both understand your environment and can run meaningful tests, and develop a clear roll-out plan.

For both first- and third-party tests, the big limitations are that (1) you don’t know about edge cases, and (2) the tests don’t take into account a dynamic environment.

But if you invest the time, money, and human capital, these will give you the best estimation for scalability.

Paper-based exercises

You can infer a product’s ability to scale by looking at how it’s architected. You can infer how it’s architected by looking at the vendor’s technical docs, downloadable assets, and also by asking.

I found that this is also where you can come across senseless jargon, so below are some things that are common across most enterprise IT products, especially cloud-based ones.

Kubernetes-based Microservices Architecture

This is one of the biggest gotchas I've come across. The architecture can scale, but it's also really hard to manage. For the Netflixes and Ubers who offer as-a-service products consumed via mobile apps or web portals with a limited number of services exposed to end customers, this makes complete sense.

The moment you have to deploy, run, and integrate these in your environment, it's another story. These microservices-based solutions need to run somewhere, either on-premises or in the cloud, on top of VMs or containers. The go-to choice (especially when you hear K8s-based) is containers running in cloud environments.

Each microservice is responsible for a function (say data ingestion or analytics) and would be packaged into its own container. Each of these containers carries the binaries for their specific function, and you need to run all the containers that make up your application.

Compared to installing a single monolithic application, you now need to deploy a bunch of containers that subsequently need to be managed via Kubernetes.

Recommendation: If the K8s-based microservices product you're buying comes with a SaaS option, take it. If you need to self-host the microservices architecture, make sure you have skilled engineers and robust DevOps practices.

Cloud-native Considerations

Cloud-native does not inherently mean scalable!

Consider an architecture where the product is either a cloud-hosted monolith or formed of tightly coupled microservices with shared state and synchronous communication patterns. A single database instance or poorly partitioned data stores will likely act as a bottleneck. Scaling depends on some fellow writing a script or navigating a hyperscaler GUI to spin up another VM. Processing data post-ingestion entails redundant computational overhead that grows linearly with data volume.

There’s a very long list of bad cloud architectural patterns.

Pro Tip - The AWS Well-Architected Framework is a great resource to evaluate vendors’ architectures against.

Super Pro Tip - Ask LLMs to compare a vendor’s technical docs against frameworks like AWS’s.

I do not expect buyers to do a deep dive into a product’s architecture. But you can listen for whether the vendor is saying the right things, which can include:

Workloads are distributed across multiple stateless microservices that communicate asynchronously through event streams and message queues.

Canary deployments gradually roll out new releases to a small subset of traffic before full deployment, so the vendor can monitor real-world performance, identify issues, and validate scalability improvements with minimal risk exposure.

Data is stored in sharded databases or distributed systems with intelligent caching layers.

Application logic operates independently of server-side state, making every instance functionally identical and interchangeable.

Session data, user preferences, and application state are externalized to scalable stores like Redis clusters or distributed databases rather than being tied to specific server instances.

Event-driven architectures have components interact via events and messages rather than direct synchronous calls.

Bulkhead patterns implement resource isolation to prevent any single component from exhausting shared resources.

Circuit breakers prevent cascading failures by temporarily stopping requests to failing services.

Automatic roll-back mechanisms that can detect failed deployments and revert to previous stable versions without service interruption.
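If you want a feel for what one of these patterns looks like in practice, here is a minimal Python sketch of a circuit breaker. It is an illustration under my own assumptions, not any vendor's implementation: the class name, the `max_failures` and `reset_after` parameters, and the injectable `clock` are all hypothetical.

```python
import time


class CircuitBreaker:
    """Minimal circuit breaker: opens after repeated failures, retries after a cooldown."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # cooldown in seconds before a trial call
        self.clock = clock                # injectable so the timing can be tested
        self.failures = 0
        self.opened_at = None             # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast instead of piling more load on a struggling service.
                raise RuntimeError("circuit open: request rejected")
            # Cooldown elapsed: let one trial call through (half-open state).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # A success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

The point for a buyer is the behavior, not the code: once a downstream service keeps failing, callers stop hammering it and get a fast error instead, which keeps one failing component from dragging down the rest.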

Multitenancy

Note that multitenancy does not mean multiple individual deployments. True multitenancy represents one deployment serving multiple tenants, even if distributed across multiple geographical regions.

If you're looking for a product that supports multitenancy, evaluate these architectural decisions:

Infrastructure-level vs logical isolation: Some solutions deploy dedicated physical instances per tenant while sharing management interfaces, while others use shared infrastructure with tenants running in isolated compute instances.

Data segregation approaches: Leading solutions implement either database-level separation (separate schemas/databases per tenant) or application-level separation (shared tables with tenant identifiers and strict access controls).

Role-based access controls: Hierarchical permission systems that can enforce tenant boundaries while allowing flexible user management within each tenant.

Cross-tenant reporting capabilities: Aggregated reporting and analytics across multiple tenants while maintaining strict data isolation and privacy controls.

Noisy neighbors: Scalable multitenant systems implement resource quotas, rate limiting, and workload isolation to prevent individual tenants from impacting others' performance.
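To illustrate the noisy-neighbor point, here is a minimal Python sketch of per-tenant rate limiting using a token bucket. The class, its parameters, and the tenant IDs are hypothetical and chosen for illustration. A real product would also need shared state across instances, which this sketch leaves out.

```python
import time


class TenantRateLimiter:
    """One token bucket per tenant, so a busy tenant cannot use up another's allowance."""

    def __init__(self, rate_per_sec, burst, clock=time.monotonic):
        self.rate = rate_per_sec  # tokens added per second, per tenant
        self.burst = burst        # maximum tokens a tenant can accumulate
        self.clock = clock        # injectable so the timing can be tested
        self.buckets = {}         # tenant_id -> (tokens, last_refill_time)

    def allow(self, tenant_id):
        now = self.clock()
        # A tenant seen for the first time starts with a full bucket.
        tokens, last = self.buckets.get(tenant_id, (self.burst, now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self.buckets[tenant_id] = (tokens - 1, now)
            return True
        self.buckets[tenant_id] = (tokens, now)
        return False
```

Because each tenant draws from its own bucket, a tenant that exhausts its quota gets throttled while everyone else carries on unaffected. That is the behavior to ask a vendor about.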

Economic Scalability

A product may be technically scalable, but it can seriously price you out if you do scale it. Remember the $65M Datadog bill that landed on the Coinbase finance team’s desk?

To prevent such a fiasco, you can consider the following:

Rate limits and throttles artificially impose limits in consumption-based models to prevent costs spinning out of control.

Greenfield large deployments (i.e. no need to slowly scale up) are better off with fixed-cost models, such as asset-based or user-based.

Some pricing models are subject to optimization. For example, ingest-based pricing can be much cheaper if you can process (filter, deduplicate, etc) data pre-ingestion. Pretty much the whole Cribl spiel.

Pass-through costs are particularly problematic for cloud-native and cloud-only solutions. AWS is already expensive. Vercel (built on AWS) is even more expensive. Vendors that run their own infrastructure can be more pricing-scalable, but perhaps less scale-scalable.
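The pre-ingestion optimization mentioned above is easy to reason about with a back-of-the-envelope calculation. Below is a minimal Python sketch, assuming a purely volume-based price and a made-up event format with `level`, `message`, and `size_bytes` fields. The function names and the filtering rules are illustrative, not how any particular product works.

```python
def reduce_before_ingest(events, drop_levels=("DEBUG",)):
    """Drop low-value events and exact duplicates before they reach a metered endpoint."""
    seen = set()
    kept = []
    for event in events:
        if event["level"] in drop_levels:
            continue  # filter: never pay to ingest noise
        key = (event["level"], event["message"])
        if key in seen:
            continue  # deduplicate: keep only the first copy
        seen.add(key)
        kept.append(event)
    return kept


def ingest_cost(events, price_per_gb):
    """Cost under a purely volume-based price; event sizes are in bytes."""
    total_bytes = sum(e["size_bytes"] for e in events)
    return total_bytes / 1e9 * price_per_gb
```

Run `ingest_cost` on the raw events and again on the output of `reduce_before_ingest` to estimate the saving. Dropping duplicates outright is a simplification. In practice you may want to keep a count of how many were dropped.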

Non-functional Scalability Factors

You may have a really good product that's scalable from all technical aspects, but if the vendor is a 30-person team, chances are it won't have the capacity to service large deployments effectively.

In those instances, the scalability requirements may fall on the internal team. So, to help in-house engineers scale the solution, the vendor can provide:

Comprehensive technical and API documentation

Multi-tier help desk with appropriate coverage (9-5, 24/7, follow-the-sun)

Drop-in and on-site support teams, especially for on-premises deployments

Service level agreements (e.g., resolving P1 incidents in under two hours)

Another non-functional aspect that can help a solution scale up despite little direct support from the vendor is the vendor’s ecosystem. This would include partners and resources such as:

Third-party managed services providers

Channels to market

Professional services providers

Technology alliances and integrations with commonly deployed technologies

Community support

Training and certification programs for partners